From Babylon* to Babbage

2600 BCE - 1838

The day the world first heard of a computer

The invention of the computer wasn't the result of many small, random, unrelated steps but of one broad stroke of genius from Charles Babbage, who invented it all in just a few months.

Babbage's initial aim was to design a mechanical calculator to make books of mathematical tables automatically.

After more than ten years of work, with still a long way to go, he regrouped and rethought his entire project. What came out of this exercise was the concept of the undisputed uber-ruler of our 21st century: the programmable digital computer.

On the night of December 15th, 1834, Babbage met with Lady Byron, her daughter Ada Lovelace and Mary Somerville.

What makes this social soirée exceptional is that these three ladies were the first humans ever to hear of Babbage's recent intellectual epiphany.

Babbage spoke of a brand new engine (the name he used for his automatic calculators), one never imagined before and destined to change our world forever.

Without giving any specific names or details, but with insights that are easily understood 180 years later, Babbage said that with this invention he was

throwing a bridge from the known to the unknown

that his breakthrough

made him feel he was standing on a mountain peak and watching mist in a valley below start to disperse, revealing a glimpse of a river whose course he could not follow, but which he knew would be bound to leave the valley somewhere

The valley he spoke of had been carved out by the gloom and decay of centuries of dark ages. For the first time he could see an escape: his machine represented a river of knowledge, science and discovery that would undoubtedly flow out of it.

He kept developing his ideas until 1838, by which time he had designed the first practical computer (both its architecture and its software algorithms). Babbage called his theoretical computer an Analytical Engine.

It took a hundred years to make "Babbage's dream come true"[1]: in 1943, a still very small IBM finished building his machine for making mathematical tables, as a gift to Harvard University.


When exactly did Babbage have his vision?

Babbage kept his ideas and drawings together in what became a multi-volume logbook, but there is no entry showing a big "eureka!" with a date next to it.

Instead, we have a letter that he wrote to a Belgian friend, which was read to the Royal Academy of Science in Brussels in early May 1835:

During the last six months I have been contriving another engine of far greater power. I have given up all other pursuits and am making drawings of it and advance rapidly but it is most improbable that it will be executed here. I am myself astonished at the powers I have given it. A year ago I would not have thought possible.

Six months before that letter puts his epiphany around the beginning of November 1834, a little more than a month before his meeting with Lady Byron.

A quick history of Babbage's invention of the computer

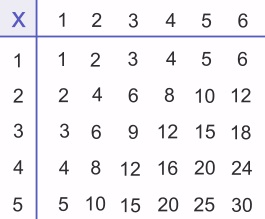

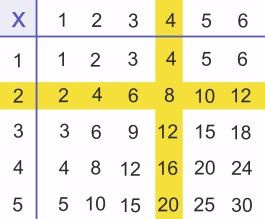

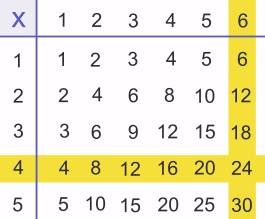

Multiplication Table

2 x 4 = ?

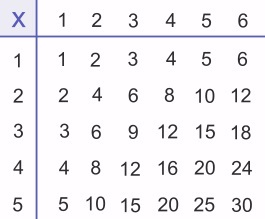

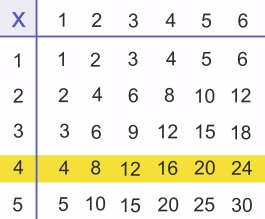

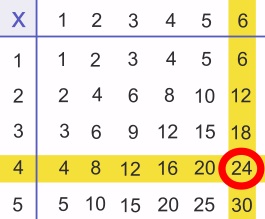

Multiplication Table

4 x 6 = ?

The concept of the computer came from the desire to compute and print books of mathematical tables automatically.

Mathematical tables were two-dimensional arrays designed to simplify manual computations.
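As a minimal illustration in Python (the name `build_multiplication_table` is hypothetical, and the 0-to-10 grid is an arbitrary choice), a table is filled in once and then used purely by lookup, row by column, which is how a reader would answer questions like 2 x 4 or 4 x 6:

```python
def build_multiplication_table(size):
    """Precompute rows and columns 0..size, where table[a][b] holds a * b."""
    return [[a * b for b in range(size + 1)] for a in range(size + 1)]


# The table maker does the work once; the user only reads values off the grid.
table = build_multiplication_table(10)
print(table[2][4])  # 8
print(table[4][6])  # 24
```

All of the effort goes into making the table; using it is a single lookup, which is exactly why any error made while compiling it would be copied by every user.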

Used for millennia, they only became obsolete in the late 1970s after the digital revolution really took off. The history of their making can be divided into two very distinct time periods.

Initially, and for more than four thousand years, they were error-free and easy to compute because the world was described with, at most, Euclidean geometry.

Mathematicians didn't need more than a few simple ones, like multiplication tables and tables of squares, to help them with their work.

In the middle of the Renaissance, two separate inventions made their construction a lot more difficult.

First, John Napier introduced a set of functions that turned:

- multiplications into additions and

- divisions into subtractions.

He called these functions logarithms.

Multiplying two numbers required only three lookups in a table of logarithms (one for each number, and a third, in reverse, to turn the sum back into an ordinary number) and one addition. Similarly, dividing two numbers required only three lookups and one subtraction.
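The three-lookup recipe can be sketched in Python. This is a minimal illustration, assuming a hypothetical printed table of common (base-10) logarithms of the whole numbers 1 to 10,000, rounded to five decimal places; `math.log10` only stands in for the human computers who would have produced it, and the helper names are made up for the example. The reverse lookup is modelled as finding the table entry whose logarithm is closest to the computed value.

```python
import bisect
import math

# Hypothetical printed table: common logarithms of 1..10000, rounded to 5 decimals.
TABLE_SIZE = 10_000
NUMBERS = list(range(1, TABLE_SIZE + 1))
LOGS = [round(math.log10(n), 5) for n in NUMBERS]


def look_up_log(n):
    """Lookup: read log10(n) off the table."""
    return LOGS[n - 1]


def look_up_antilog(value):
    """Reverse lookup: find the table entry whose logarithm is closest to value."""
    i = bisect.bisect_left(LOGS, value)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(LOGS)]
    best = min(candidates, key=lambda j: abs(LOGS[j] - value))
    return NUMBERS[best]


def multiply(a, b):
    # Two lookups and one addition, then a third (reverse) lookup.
    return look_up_antilog(look_up_log(a) + look_up_log(b))


def divide(a, b):
    # Two lookups and one subtraction, then a third (reverse) lookup.
    return look_up_antilog(look_up_log(a) - look_up_log(b))


print(multiply(37, 52))  # 1924
print(divide(1924, 52))  # 37
```

Because this toy table only lists whole numbers, the sketch returns the exact answer only when the true result is a whole number between 1 and 10,000; real tables listed fractional arguments and relied on interpolation. Note also that every value in `LOGS` is rounded, so a single wrong digit in the table would silently corrupt every product that passes through it.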

This was sensational: it took away one level of complexity for the scientists who used the tables, but added one for the human computers who made them.

On top of that, about twenty years later, René Descartes introduced his Cartesian coordinates, laying the foundations of analytic geometry.

Analytic geometry gave us the tools to study the trajectories of cannonballs, the heavenly motions of the planets... the entire behavior of the physical world, really, but all of this required much more complex mathematical calculations.

As a result, mathematical tables of a much higher degree of sophistication were created, but they were totally unreliable and sprinkled with errors, mostly because they were compiled by human computers (hence the name of the machines that would eventually replace them for the job).

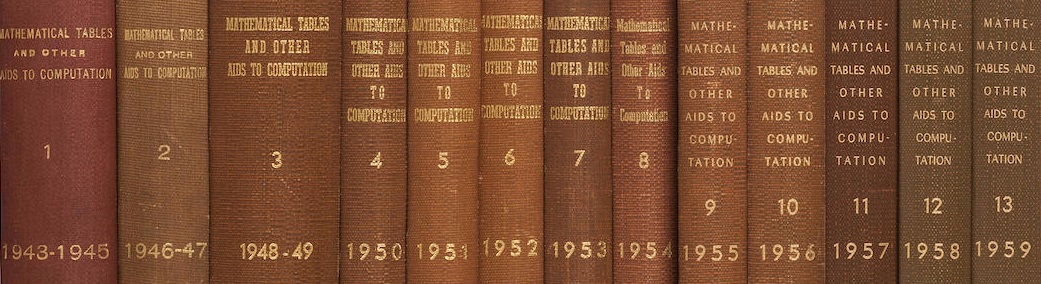

Mathematical tables were so important that they got their own quarterly journal named Mathematical Tables and Aids to Computation (MTAC) during and after the Second World War. MTAC was published from 1943 to 1959.

To remove this human propensity to err, Charles Babbage, a British inventor of the Victorian era, developed machinery to make mathematical tables automatically, without human involvement. He came up with two designs in his lifetime:

- the first one was not programmable,

- but the second one had "many of the salient features of the modern computer".[1]

Babbage called his theoretical computer an Analytical Engine since it could compute and print the mathematical tables of any kind of function that resulted from the use of analytic geometry.

This meant that the entire behavior of our physical world had the potential of being studied with the help of a machine!

Realizing the scope of his invention, Babbage spent the rest of his life designing it but, without the possibility of using electrical components, he was unable to build it. Its unfinished drawings describe a multi-ton mechanical monster that was to be driven by a steam engine.

Babbage also wrote many programs of various complexity to demonstrate the flexibility of his machine.[2] The most important of these programs was a demonstration of the use of software loops.

Ada Lovelace, first independent programmer

With very little interest in his work at home, Babbage went to Turin, Italy, in 1840 to present a paper on the Analytical Engine. In the audience was L. F. Menabrea, a scientist and a general... Menabrea was fascinated by Babbage's notion and published a detailed description of the talk in 1842.

Ada Lovelace. By correcting a program written by Babbage, she became the first programmer, and the only one for a hundred years.

Lady Lovelace, a friend of Babbage's and the only person who really understood the Analytical Engine, decided to translate Menabrea's French article into English; the result became the Sketch of the Analytical Engine.

What makes this translation famous is that she added many notes to better explain what Menabrea was talking about; in fact, her notes were longer than the original article.

In his article, Menabrea showed a few lists of instructions, copied verbatim from Babbage's exposé, to demonstrate the use of commands in software.

But one of Lovelace's notes described an algorithm to compute and print successive Bernoulli numbers. It would turn out to be the only program using a true algorithm (a software loop) published in the 19th century; in fact, it would be the only real program published in a hundred years, since the next one required the existence of IBM's Analytical Engine: the ASCC, in the early 1940s.
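To give a feel for what such a looping program does, here is a minimal modern sketch in Python. It is not a transcription of the program in Lovelace's notes, which worked from its own formula and numbering; it swaps in the standard recurrence in which B_0 = 1 and, for every m of at least 1, the sum over k from 0 to m of C(m+1, k) B_k equals 0. It uses the convention B_1 = -1/2, and the function name is hypothetical.

```python
from fractions import Fraction
from math import comb


def bernoulli_numbers(count):
    """Return B_0 .. B_{count-1} via sum_{k=0}^{m} C(m+1, k) * B_k = 0."""
    b = [Fraction(1)]  # B_0 = 1
    for m in range(1, count):       # outer loop: one new number per pass
        total = Fraction(0)
        for k in range(m):          # inner loop: reuse every number computed so far
            total += comb(m + 1, k) * b[k]
        b.append(-total / (m + 1))  # C(m+1, m) = m + 1, so solve for B_m
    return b


for n, value in enumerate(bernoulli_numbers(9)):
    print(n, value)
```

The nested loops are the point: the same few instructions are repeated, each pass feeding on the results of the previous ones, instead of being written out once per number.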

We know that

in the period 1836 to 1840 Babbage devised about 50 user level programs for the Analytical Engine. Many of these were relatively simple... others allow[ed for] the solution of simultaneous equations

but none were published besides the ones contained in Menabrea's article and Lovelace's notes.

In his memoirs, Babbage tells us that he and Lady Lovelace had discussed which examples should be included in her notes and that she had chosen and written all of them except for the program

relating to the numbers of Bernoulli, which I [Babbage] had offered to do to save Lady Lovelace the trouble. This she sent back to me for an amendment, having detected a grave mistake which I had made in the process.

It is obvious that Babbage, the inventor of a programmable machine, would have written a lot of programs for it, in his head and on paper. But Ada Lovelace corrected the master by detecting a grave mistake. She was the only person around him who fully understood the concept of the Analytical Engine, the only person who saw its full potential and knew how to program it. This makes her the first independent programmer; the first programmer, really.

She also knew that the Analytical Engine was a lot more than an automatic calculating machine and, for instance, she truly believed that:

the engine might compose elaborate and scientific pieces of music of any degree of complexity or extent.

Remember that this was written in 1842.

A hundred and forty years later, in 1982, David Cope, a composer, scientist, and professor of music at the University of California, Santa Cruz, started to write EMI (Experiments in Musical Intelligence), a computer program designed to compose original music.

By 1997, EMI had fooled an audience into thinking that a piece it had created was an original Bach, in a test where the listeners had to choose between its work and an original piece by Bach himself!

Bravo David Cope! Bravo Lady Lovelace!

Was Babbage's Analytical Engine a real computer?

Yes, absolutely. Even though it was only theoretical, it wasn't missing a thing; apart from its mechanical design, everything a modern computer has was there:

It could be programmed by the use of punched cards. It had a separate 'memory' and 'central processor'. It was capable of 'looping' ... as well as conditional branching... It incorporated 'microprogramming' as well as 'pipelining' (the preparation of a result in advance of its need)... It also featured a range of input and output devices, including graph plotters, printers, and card readers and punches. In short, Babbage had designed what we would now call a general-purpose digital computing engine.

This description covers all of the salient features of a computer. Of course, the technology used by IBM was different, going from Babbage's improbable mechanical construction to a successful electromechanical design, but that doesn't change the fact that his Analytical Engine was the model from which IBM built the first computer, the ASCC.

What was the difference between Babbage's calculating engines?

It is important to note that Babbage would not have invented the computer if he hadn't spent more than ten years (1822-1834) trying to build his first machine, which he called a Difference Engine. It was a necessary first step.

The Difference Engine was to be a mechanical wonder never seen before, but totally inflexible, where any change to its behavior required a physical change to its hardware. It "was massively larger than anything that had been done before... The design called for some 25,000 separate parts, equally split between the calculating section and the printer."[14]
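The Difference Engine took its name from the method of finite differences, which tabulates a polynomial using nothing but repeated additions. The Python sketch below illustrates that method only; it is not a model of Babbage's mechanism, and the function name is hypothetical. Its single procedure is fixed: feeding it different starting values yields a different polynomial, but making it do anything other than add columns means rewriting the function itself, the software analogue of the hardware changes described above.

```python
def difference_engine(initial_differences, rows):
    """Tabulate a polynomial by the method of finite differences, using only addition.

    initial_differences holds f(0), then the first, second, ... differences at 0.
    For a polynomial of degree d the d-th difference is constant, so d + 1
    columns are enough.
    """
    columns = list(initial_differences)
    table = []
    for _ in range(rows):
        table.append(columns[0])
        # Each column absorbs the one to its right: pure additions, no multiplication.
        for i in range(len(columns) - 1):
            columns[i] += columns[i + 1]
    return table


# Table of squares f(x) = x**2: f(0) = 0, first difference 1, second difference 2.
print(difference_engine([0, 1, 2], 8))  # [0, 1, 4, 9, 16, 25, 36, 49]
```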

It is the synthesis of more than ten years spent:

- designing his first engine

- visiting factories to learn about the latest manufacturing practices

- studying how other makers of mathematical tables had handled the challenge

- and, finally, falling out with the constructor of the Difference Engine

that gave him the idea for his second engine.

The Difference Engine was a 'number cruncher' composed of a mechanical calculator and a bunch of printers. The Analytical Engine was designed from the start to be a 'program reader' that operated a mechanical calculator. With the Analytical Engine the machine never changes: it is simple and basic, yet universal, because it can run an infinite variety of programs.

The Analytical Engine was designed to behave like a conductor reading a music sheet made of instructions, one at a time, in sequence. These instructions made it juggle numbers, with the help of a logical machine, between many input and output devices, a four-function mechanical calculator and a memory bank, which is exactly what a modern computer does.
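The "conductor" idea can be sketched as a toy interpreter in Python, under loudly stated assumptions: the card format and instruction names (`ADD`, `SUB`, `MUL`, `DIV`, `PRINT`, `JUMP_IF_POSITIVE`) are invented for illustration and are not Babbage's actual card notation. The `store` dictionary plays the part of the memory bank (Babbage called it the Store) and `MILL` the four-function calculator (his Mill); a conditional jump back to an earlier card provides the looping and branching mentioned above.

```python
import operator

# The "mill": a four-function calculator (hypothetical names, not Babbage's notation).
MILL = {
    "ADD": operator.add,
    "SUB": operator.sub,
    "MUL": operator.mul,
    "DIV": operator.truediv,
}


def run(cards, store):
    """Read instruction cards one at a time, in sequence, and return the printed output."""
    output = []
    pc = 0  # index of the card currently being read
    while pc < len(cards):
        op, *args = cards[pc]
        pc += 1
        if op in MILL:
            # Fetch two numbers from the store, compute in the mill, store the result.
            dest, left, right = args
            store[dest] = MILL[op](store[left], store[right])
        elif op == "PRINT":
            output.append(store[args[0]])
        elif op == "JUMP_IF_POSITIVE":
            # Conditional branch: continue from another card if the value is positive.
            var, target = args
            if store[var] > 0:
                pc = target
        else:
            raise ValueError(f"unknown card: {op}")
    return output


# Program: print the squares of 5, 4, 3, 2, 1 using a loop.
cards = [
    ("MUL", "sq", "n", "n"),      # card 0: sq = n * n
    ("PRINT", "sq"),              # card 1: send sq to the printer
    ("SUB", "n", "n", "one"),     # card 2: n = n - 1
    ("JUMP_IF_POSITIVE", "n", 0), # card 3: loop back to card 0 while n > 0
]
print(run(cards, {"n": 5, "one": 1}))  # [25, 16, 9, 4, 1]
```

The `run` function never changes; only the deck of cards does, which is the contrast with a fixed-purpose machine like the Difference Engine.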

Babbage described his engine as eating its own tail, because it used everything that had been computed before to perform the current operation, modifying the machine ever so slightly for the next one.

Without a program to execute, a computer is nothing more than an expensive doorstop: it does not know what to do.

A computer is so basic, a simple calculator flanked by an even simpler logical machine, and yet it seems alive, animated. It is the cleverness of its programs that makes it clever; thinking and strategy come from its programs and programmers. Just like a CD player, a pile of electronics that can melt your heart by playing the most beautiful music, the computer is nothing more than a pile of electronics that can emulate and amplify the most profound thoughts of the people who design its programs.

References

[1] A. Hyman, Charles Babbage: Pioneer of the Computer, p. 166 (1985)

[2] Allan G. Bromley, Inside the world's first computer, New Scientist, Volume 99, No 1375, p. 784 (1983)

[3] Charles Babbage, Passages from the life of a philosopher, p. 136 (1864)

[4] Charles & Ray Eames, A Computer Perspective, p. 49 (1973)

[5] M. Wilkes, The Radio and Electronic Engineer, Volume 45, No 7, p. 332 (July 1975)

[6] BINAC (USA), Manchester Mark I (UK), EDSAC (UK), CSIRAC (Australia)

[7] I. Bernard Cohen, Howard Aiken, Portrait of a Computer Pioneer, p. 38 (1999)

[8] Ibid., pp. 66-67

[9] Edited by Brian Randell, The Origins of Digital Computers, Selected Papers, pp. 187-206 (1973)

[10] Edited by Henry Prevost Babbage, Babbage Calculating Engines, Ada Lovelace, Sketch of the Analytical Engine, p. 23 (1889) reprint by Charles Babbage Institute

[11] R. Ligonnière, Préhistoire et Histoire des Ordinateurs, p. 242 (1987)

[12] M. Wilkes, Automatic Digital Computers, p. 20 (1956)

[14] Doron Swade, The Difference Engine, Charles Babbage and the Quest to Build the First Computer, p. 48 (2001)

(*)

I am using the town of Babylon instead of the town of Shuruppak, even though Shuruppak is where the oldest mathematical table ever discovered was found.

This table of squares was written on a clay tablet and has been dated to around 2600 BCE. The catch is that Babylon was only founded around 2300 BCE, three hundred years after this tablet was inscribed, but Babylon sounds so much better than Shuruppak, and the two towns are not too far apart, so I decided to use Babylon in the title.

Version —β 9.3.2—

Charles Babbage (1791-1871)